The whole subject was way too long for a single article. Therefore, I’ve divided it into 5 parts.

I hacked another thing together, this time in order to install a highly available Docker Swarm cluster on CoreOS (yeah, Container Linux), using Ansible.

K3s is the lightweight Kubernetes distribution by. K3s includes: Flannel: a very simple L2 overlay network that satisfies the.

When you create a macvlan network, your networking equipment needs to be able to handle promiscuous mode, where one physical interface can be assigned multiple MAC addresses. If your application can work using a bridge (on a single Docker host) or overlay (to communicate across multiple Docker hosts), these solutions may be better in the long term.

Docker Engine has DNS and load-balancing capability too. Name resolution works for services in the same overlay network (try to ping the DB container from the UI container using the service name of the DB swarm service instead of an IP address), and there is built-in support for load balancing across service replicas via virtual IPs (you can look up the VIP using docker service inspect).
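To make the name-to-VIP-to-replica idea a bit more concrete, here is a toy model in plain Python. It does not talk to Docker at all: the class names, the IP addresses and the round-robin choice of replica are all assumptions made for illustration, not Docker's actual implementation.

```python
import itertools


class ServiceModel:
    """Toy model: one service name -> one virtual IP -> several replicas."""

    def __init__(self, name, vip, replica_ips):
        self.name = name
        self.vip = vip
        # Round-robin selection is an assumption made for this sketch.
        self._replicas = itertools.cycle(replica_ips)

    def pick_replica(self):
        return next(self._replicas)


class OverlayDnsModel:
    """Hypothetical stand-in for name resolution inside one overlay network."""

    def __init__(self):
        self._services = {}

    def register(self, service):
        self._services[service.name] = service

    def resolve(self, name):
        # Clients only ever see the VIP, never the replica addresses.
        return self._services[name].vip

    def connect(self, name):
        # Returns (address the client dialled, replica that answered).
        service = self._services[name]
        return service.vip, service.pick_replica()


dns = OverlayDnsModel()
dns.register(ServiceModel("db", "10.0.0.2", ["10.0.0.3", "10.0.0.4"]))

print(dns.resolve("db"))
for _ in range(3):
    print(dns.connect("db"))
```

The point of the sketch is that the UI container only needs the name "db". Which replica ends up answering is decided behind the VIP.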

This means that you need to assign a port on the host machine to each container, and then somehow forward all traffic on that port to that container. What if your application needs to advertise its own IP address to a container that is hosted on another node? It doesn’t actually know its real IP, since its local IP is getting translated into another IP and a port on the host machine. You can automate the port-mapping, but things start to get kinda complex when following this model. That’s why Kubernetes chose simplicity and skipped the dynamic port-allocation deal.
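The problem is easier to see with a tiny simulation. The sketch below is plain Python with made-up addresses and a hypothetical `HostPortMapper` class. It only mimics the bookkeeping of a host that hands out one port per container, and shows that the address the container sees for itself is not the address other nodes must use.

```python
class HostPortMapper:
    """Toy model of per-container port mapping on a single host."""

    def __init__(self, host_ip, first_port=32768):
        # first_port is an arbitrary starting value chosen for this sketch.
        self.host_ip = host_ip
        self._next_port = first_port
        self._table = {}  # host_port -> (container_ip, container_port)

    def publish(self, container_ip, container_port):
        """Reserve the next free host port for a container port."""
        host_port = self._next_port
        self._next_port += 1
        self._table[host_port] = (container_ip, container_port)
        return host_port

    def forward(self, host_port):
        """Where traffic arriving on host_port ends up."""
        return self._table[host_port]

    def external_address(self, container_ip, container_port):
        """The address remote peers actually have to dial."""
        for host_port, target in self._table.items():
            if target == (container_ip, container_port):
                return self.host_ip, host_port
        raise KeyError("container port is not published")


host = HostPortMapper("192.168.1.10")
host.publish("172.17.0.2", 8080)

# What the application believes its address is:
print(("172.17.0.2", 8080))
# What a container on another node has to use instead:
print(host.external_address("172.17.0.2", 8080))
```

An application that advertises `172.17.0.2:8080` to its peers hands out an address that is meaningless outside its own host, which is exactly the complexity described above.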

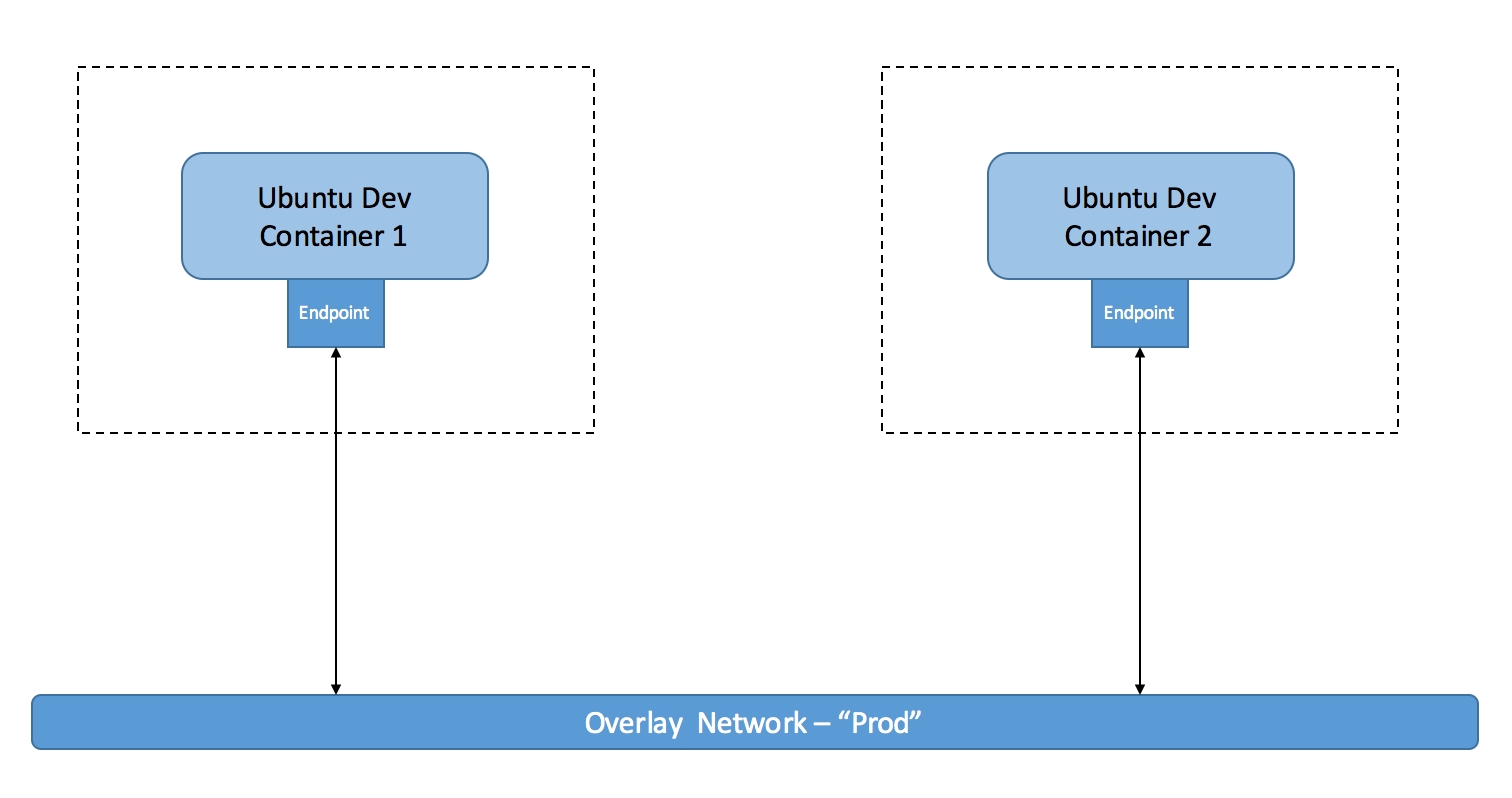

Give me some CNM!

I talked to you about the Container Network Interface (CNI) when talking about Kubernetes in a previous article. Docker uses a different standard, called the Container Network Model (CNM), which is implemented by Docker’s libnetwork. They just went nuts and decided to go crazy on the dynamic port-forwarding part. For this, they relied heavily on the existing Linux kernel’s networking stack. This is fairly cool, since the existing Linux networking features are pretty mature and robust already.

In order to provide its networking, Docker uses numerous Linux networking tools as building blocks to handle all of its forwarding, segmentation and management needs. Primarily, the most used tools are Linux bridges, network namespaces, virtual ethernet devices and iptables.

A Linux bridge is a virtual implementation of a physical switch inside of the Linux kernel.

Network namespaces are used to provide isolation between processes, analogous to regular namespaces. They ensure that two containers, even if they are on the same host, won’t be able to communicate with each other unless explicitly configured to do so.

Virtual ethernet devices, or veth, are interfaces that act as connections between two network namespaces. They have a single interface in each namespace. When a container is attached to a Docker Network, one end of the veth is placed inside the container under the name of ethX, and the other is attached to the Docker Network.

Iptables is a packet filtering system, which acts as a layer 3/4 firewall and provides packet marking, masquerading and dropping features. The native Docker Drivers use iptables in heavy amounts in order to do network segmentation, port mapping, traffic marking and packet load balancing.

Now that you know all that, let’s talk about models.

The CNM is built around three components: the Sandbox, the Endpoint and the Network. A Sandbox may contain endpoints from multiple networks. The Endpoint connects a Sandbox to a Network. An Endpoint can belong to only one Network, and one Sandbox. It gives connectivity to the services that are exposed in a Network by a container. The Network is a collection of Endpoints that are able to talk to each other. A Network consists of many endpoints.

Each one of these components has an associated CNM object on libnetwork and a couple of other abstractions that allow the whole thing to work together nicely.

The NetworkController object exposes an API entrypoint to libnetwork, which users (like the Docker Engine) use in order to allocate and manage Networks. It also binds a specific driver to a network.

The Driver object, not directly visible to the user, makes Networks work in the end. It is configured through the NetworkController. Basically, the Driver owns a network and handles all of its management.

The Network object is an implementation of the Network component. It is created using the NetworkController. The corresponding Driver object will be notified upon its creation or modification. The Driver will then connect Endpoints that belong to the same Network, and isolate those that belong to different ones. This provided connectivity can span many hosts; therefore, the Network object has a global scope within a cluster.

The Endpoint object is basically a Service Endpoint. It provides connectivity from and to Services provided by other containers in the Network. A Network object provides an API to create and manage Endpoints. Endpoints are global to the cluster as well, since they represent a Service rather than a particular container.

The Sandbox object, much like the component described above, represents the configuration of the container’s network stack, such as interface management, IP and MAC addresses, routing tables and DNS settings. It is created when a user requests an Endpoint creation on a Network.
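The relationships between these objects can be sketched in a few lines of Python. To be clear, libnetwork itself is written in Go, and none of the class or method names below are its real API. They are hypothetical names picked only to mirror the rules from the text: an Endpoint belongs to exactly one Network and at most one Sandbox, while a Sandbox can hold Endpoints from several Networks.

```python
class Endpoint:
    def __init__(self, name, network):
        self.name = name
        self.network = network  # fixed at creation: exactly one Network
        self.sandbox = None     # set once the Endpoint joins a Sandbox


class Network:
    def __init__(self, name, driver):
        self.name = name
        self.driver = driver    # the driver bound by the controller
        self.endpoints = []

    def create_endpoint(self, name):
        endpoint = Endpoint(name, self)
        self.endpoints.append(endpoint)
        return endpoint


class Sandbox:
    """Stands for one container's network stack."""

    def __init__(self, container_id):
        self.container_id = container_id
        self.endpoints = []

    def join(self, endpoint):
        if endpoint.sandbox is not None:
            raise ValueError("an Endpoint can belong to only one Sandbox")
        endpoint.sandbox = self
        self.endpoints.append(endpoint)

    def networks(self):
        return {endpoint.network.name for endpoint in self.endpoints}


class NetworkController:
    """Entry point that allocates Networks and binds a driver to each."""

    def __init__(self):
        self.networks = {}

    def new_network(self, driver, name):
        network = Network(name, driver)
        self.networks[name] = network
        return network


def can_talk(a, b):
    # Endpoints are connected only when they share a Network.
    return a.network is b.network


controller = NetworkController()
frontend = controller.new_network("overlay", "frontend")
backend = controller.new_network("overlay", "backend")

web = Sandbox("web-container")
web.join(frontend.create_endpoint("web-front"))
web.join(backend.create_endpoint("web-back"))
print(sorted(web.networks()))
```

Here a single container ends up attached to two Networks through two separate Endpoints, which is the only way a Sandbox reaches more than one Network in this model.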
A Sandbox object may have multiple Endpoints, and therefore may be connected to multiple Networks. Its scope is local, since it is associated with a particular container on a given host.

As I said earlier, there are two basic types of Drivers: native and remote. Native Drivers don’t require any extra modules and are included in the Docker Engine by default.

Native Drivers include: Host, Bridge, Overlay, MACVLAN and None.

When using the Host driver, the container uses the Host’s network stack, without any namespace separation, and while sharing all of the host’s interfaces.

The Bridge driver creates a Docker-managed Linux bridge on the Docker host. By default, all containers created on the same bridge can talk to each other.

The Overlay driver creates an overlay network that may span over multiple Docker hosts. It uses both local Linux bridges and VXLAN to overlay inter-container communication over physical networks.

The MACVLAN driver uses the MACVLAN bridge mode to establish connections between container interfaces and parent host interfaces.

With the None driver, the container stays isolated from every other Network, and even from its own host’s network stack.

Remote drivers are created either by vendors or the community. The Remote Drivers that are compatible with CNM are contiv, weave, calico (which we used on our Kubernetes deployment!) and kuryr. I won’t be talking about these since we will not be using them.

Different network drivers have different scopes. We will talk about overlay networks, since they hold a “swarm” scope, which means that they have the same Network ID throughout the cluster, and which is what we wanted to explain in the first place.

The Network Control Plane manages the state of Docker Networks within a Swarm cluster, while also propagating control-plane data. It uses a gossip protocol to propagate all the aforementioned information. It is scoped by Network, which is quite cool since it dramatically reduces the amount of updates a host receives.

It is built upon many components that work together in order to achieve fast convergence, even in large scale clusters and networks. Messages are passed in a peer-to-peer fashion, expanding the information in each exchange to an even larger group of nodes. Both the intervals of transmission and the size of the peering groups are fixed, which helps keep the network usage in check. Full state syncs are done often, in order to achieve consistency faster and fix network partitions. Also, topology-aware algorithms are used in order to optimise peering groups using relative latency as criteria.

Overlay networks rely heavily on this Network Control Plane. For the container traffic itself, an overlay network uses standard VXLAN to encapsulate it and send it to other containers.
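To get a feel for why gossip with a fixed peering-group size converges quickly, here is a small simulation in plain Python. It is a generic push-gossip sketch, not Docker's actual protocol: the function name, the uniform random peer selection and the default values are assumptions, and it ignores full state syncs and the latency-aware peer selection mentioned above.

```python
import random


def simulate_gossip(node_count, fanout, seed=0, max_rounds=1000):
    """Count the rounds until every node has heard one update.

    Each round stands for one fixed transmission interval. Every node that
    already knows the update pushes it to `fanout` randomly chosen nodes,
    so the per-node traffic in a round never grows.
    """
    rng = random.Random(seed)
    informed = {0}  # node 0 originates the update
    rounds = 0
    peers_per_node = min(fanout, node_count)
    while len(informed) < node_count and rounds < max_rounds:
        newly_informed = set()
        for _ in informed:
            newly_informed.update(rng.sample(range(node_count), peers_per_node))
        informed |= newly_informed
        rounds += 1
    return rounds, len(informed)


for size in (10, 100, 1000):
    rounds, reached = simulate_gossip(size, fanout=3)
    print(f"{size} nodes: {reached} reached after {rounds} rounds")
```

Running it for growing cluster sizes shows the number of rounds rising much more slowly than the number of nodes, while each node still sends only `fanout` messages per round.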


Author: David
